Debugging Docker performance issues on Dell XPS 15 7590

We recently bought a new set of laptops at Debricked, and since I’m a huge fan of Arch Linux, I decided to use it as my main operating system.

The laptop in question, a Dell XPS 15 7590, is equipped with both an Intel CPU with built-in graphics and a dedicated Nvidia GPU. You may wonder why this is important to mention, but by the end of this article, you’ll know. For now, let’s discuss the performance issues I quickly discovered.

Docker performance issues

Our development environment makes heavy use of Docker containers, with local folders bind mounted into the containers. While my new installation seemed snappy enough during regular browsing, I immediately noticed something was wrong once I had set up my development environment. One of the first steps is to recreate our test database. This usually takes around 3-4 minutes, and I admit I was eagerly looking forward to benchmarking how fast this would be on my shiny new laptop.

After a minute I started to realize that something was terribly wrong.

16 minutes and 46 seconds later, the script had finished, and I was disappointed. Recreating the database was almost five times as slow as on my old laptop! Running the script again using time ./recreate_database.sh, I got the following output:

    real 16m45.615s
    user 2m16.547s
    sys  13m3.622s

What stands out is the extreme amount of time spent in kernel space. What is happening here? A quick check on my old laptop, for reference, showed that it only spent 4 seconds in kernel space for the same script. Clearly something was way off with the configuration of my new installation.
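To put a number on "extreme", the output of time can be converted to seconds and the kernel share of CPU time computed. The following is a minimal Python sketch; the function names are made up for illustration and are not part of any tool used in this post.

```python
import re

# Matches durations in the format bash's `time` keyword prints, e.g. "16m45.615s".
_DURATION = re.compile(r"(\d+)m(\d+(?:\.\d+)?)s")


def parse_duration(text: str) -> float:
    """Convert a duration such as '13m3.622s' to seconds."""
    match = _DURATION.fullmatch(text.strip())
    if match is None:
        raise ValueError(f"unrecognised duration: {text!r}")
    minutes, seconds = match.groups()
    return int(minutes) * 60 + float(seconds)


def parse_time_output(output: str) -> dict[str, float]:
    """Parse the real/user/sys fields of `time` output into seconds."""
    tokens = output.split()
    # Tokens alternate between a label (real, user, sys) and its duration.
    return {label: parse_duration(value)
            for label, value in zip(tokens[::2], tokens[1::2])}


def kernel_share(times: dict[str, float]) -> float:
    """Fraction of CPU time (user + sys) that was spent in kernel space."""
    return times["sys"] / (times["user"] + times["sys"])


if __name__ == "__main__":
    slow_run = parse_time_output("real 16m45.615s user 2m16.547s sys 13m3.622s")
    print(f"kernel share of CPU time: {kernel_share(slow_run):.0%}")
```

For the run above, this works out to roughly 85% of the CPU time being spent in the kernel.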

Debug all things: Disk I/O

My initial thought was that the underlying issue was with my partitioning and disk setup. All that time in the kernel must be spent on something, and I/O wait seemed like the most likely candidate. I started by checking the Docker storage driver, since I’ve heard that the wrong driver can severely affect the performance, but no, the storage driver was overlay2, just as it was supposed to be.
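The storage driver check can be scripted as well. This is a rough sketch that assumes the docker CLI is on the PATH and the daemon is running; it relies on docker info accepting a Go template via --format and exposing the driver under the "Driver" key of its JSON output. The helper names are hypothetical.

```python
import json
import subprocess


def storage_driver_from_info(info_json: str) -> str:
    """Extract the storage driver name from `docker info` JSON output."""
    return json.loads(info_json)["Driver"]


def current_storage_driver() -> str:
    """Ask the local Docker daemon which storage driver it uses."""
    result = subprocess.run(
        ["docker", "info", "--format", "{{json .}}"],
        capture_output=True,
        text=True,
        check=True,  # raise if the docker command fails
    )
    return storage_driver_from_info(result.stdout)
```

Calling current_storage_driver() on a setup like the one described here would be expected to return overlay2.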

The partition layout of the laptop was a fairly ordinary LUKS+LVM+XFS layout, to get a flexible partition scheme with full-disk encryption. I didn’t see a particular reason why this wouldn’t work, but I tested several different options:

- Using ext4 instead of XFS

- Creating an unencrypted partition outside LVM

- Using a ramdisk

After using a ramdisk and still getting an execution time of 16 minutes, I realised that disk I/O clearly couldn’t be the culprit.

What’s really going on in the kernel?

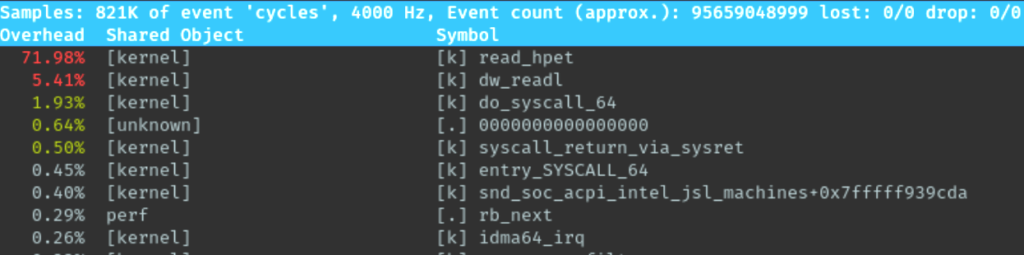

After some searching, I found out about the very neat perf top tool, which allows profiling of threads, including threads in the kernel itself. Very useful for what I’m trying to do!

Firing up perf top at the same time as running the recreate_database.sh script yielded the following very interesting results, as can be seen in the screenshot below.

That’s a lot of time spent in read_hpet, the kernel function that reads the High Precision Event Timer (HPET). A quick check on my other computers showed that none of them had the same behaviour. Finally I had some clue on how to proceed.
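One way to follow up on a hot read_hpet is to check which clocksource the kernel is using, since read_hpet is the read routine of the hpet clocksource. This post does not record which clocksource was active, so treat the link as an assumption rather than a diagnosis. The sketch below reads the standard sysfs files; the function names are hypothetical.

```python
from pathlib import Path

# Standard sysfs location for clocksource information on Linux.
CLOCKSOURCE_DIR = Path("/sys/devices/system/clocksource/clocksource0")


def parse_clocksources(current_text: str, available_text: str) -> tuple[str, list[str]]:
    """Parse the contents of current_clocksource and available_clocksource."""
    return current_text.strip(), available_text.split()


def read_clocksources(base: Path = CLOCKSOURCE_DIR) -> tuple[str, list[str]]:
    """Return the clocksource in use and the list of available ones."""
    return parse_clocksources(
        (base / "current_clocksource").read_text(),
        (base / "available_clocksource").read_text(),
    )


def describe(current: str) -> str:
    """Give a short hint about what the active clocksource implies."""
    if current == "hpet":
        return "hpet is active: timestamp reads go through read_hpet"
    return f"{current} is active"
```

Running describe(read_clocksources()[0]) before and after a configuration change would show whether the kernel is relying on the HPET for timekeeping.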

The solution

While reading up on the HPET was interesting on its own, it didn’t really give me an immediate answer to what was happening. However, in my aimless, almost desperate, searching I did stumble upon a couple of threads discussing the performance impact of having HPET either enabled or disabled when gaming.

While not exactly related to my problem (I simply want my Docker containers to work, not do any high-performance gaming), I did start to wonder which of the graphics cards was actually being used on my system. After installing Arch, the graphical interface worked from the beginning without any configuration, so I hadn’t actually selected which driver to use: the one for the integrated GPU, or the one for the dedicated Nvidia card.

After running lsmod to list the currently loaded kernel modules, I discovered that modules for both cards were in fact loaded, in this case both i915 and nouveau. Now, I have no real use for the dedicated graphics card, so having it enabled would probably just draw extra power. So, I blacklisted the modules related to nouveau by adding them to /etc/modprobe.d/blacklist.conf, in this case the following modules:

    blacklist nouveau
    blacklist rivafb
    blacklist nvidiafb
    blacklist rivatv
    blacklist nv
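The lsmod check can also be scripted, which makes it easy to compare the state before and after a reboot. lsmod formats the contents of /proc/modules, where each line starts with the module name. This is a minimal sketch; the set of module names is an assumption for illustration, and "nvidia" (the proprietary driver) does not appear anywhere in this post.

```python
from pathlib import Path

# GPU kernel modules of interest. i915 and nouveau are the ones named in
# this post; "nvidia" is added as an assumption for completeness.
GPU_MODULES = {"i915", "nouveau", "nvidia"}


def loaded_modules(proc_modules_text: str) -> set[str]:
    """Return module names from text in /proc/modules format."""
    # The module name is the first whitespace-separated field on each line.
    return {line.split()[0]
            for line in proc_modules_text.splitlines()
            if line.strip()}


def loaded_gpu_modules(proc_modules_text: str) -> set[str]:
    """Return the GPU modules from GPU_MODULES that are currently loaded."""
    return loaded_modules(proc_modules_text) & GPU_MODULES


def read_proc_modules(path: Path = Path("/proc/modules")) -> str:
    """Read the kernel's list of loaded modules (Linux only)."""
    return path.read_text()
```

On a machine in the state described above, loaded_gpu_modules(read_proc_modules()) would contain both i915 and nouveau. After the blacklist takes effect, only i915 should remain.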

Upon rebooting the computer, I confirmed that only the i915 module was loaded. To my great surprise, I also noticed that perf top no longer showed any significant time spent in read_hpet. I immediately tried recreating the database again, and finally I got the performance boost I wanted from my new laptop, as can be seen below:

    real 2m36.722s
    user 1m39.528s
    sys  0m2.674s

As you can see, almost no time is spent in kernel space, and the total time is now shorter than the 3-4 minutes my old laptop needed. Finally, to confirm, I whitelisted the modules again, and after a reboot the problem was back! Clearly the loading of nouveau causes a lot of overhead, for some reason still unknown to me.

Conclusion

So there you go, apparently having the wrong graphics drivers loaded can make your Docker containers unbearably slow. Hopefully, this post can help someone else in the same position as me to get their development environment up and running at full speed.